Streamlining SQL Creation Using GitHub Copilot Agent!

Introduction

Hello, this is Hirata from the Analysis Production Group!

As an analyst, I’d like to talk about how to streamline the SQL creation tasks I handle every day.

In this article, I will talk about how I used Github Copilot Agent and "Python" to streamline the task of writing complex SQL consisting of hundreds of lines, its trial-and-error process and results, and future improvements.

【Summary】

✔︎ Preparing table information in advance and having the generative AI create SQL

✔︎ Implementing a system to automatically execute and check the created SQL using Python

✔︎ Having the AI automatically fix errors upon their occurrence to improve work efficiency

Background: Daily SQL Creation Tasks and Their Challenges

I face the following problems daily:

-

Complicated interactions with the generative AI

It was necessary to repeatedly explain table information, data types, date formats, and so on to the generative AI each time, which was a time-consuming task. -

Creation of massive SQL

I have to write hundreds of lines of SQL for tasks such as extracting users for marketing purposes or creating data for analysis, with complex processing logic scattered throughout. -

Repeated trial-and-error (Loop)

The repetitive cycle of copying and executing the generated SQL, and when an error is encountered, I forward the error log to request a correction…this has become a bottleneck.

If I fix myself, differences from the latest version created by GitHub Copilot arise, and when I request the next fix, it sometimes reverts to a previous state.

Trial and Error! Building an Automated Workflow Using Generative AI and Python

I sought to enhance work efficiency by adopting the following process.

Overview of the Automation Flow

-

Registration of preliminary information

I compile the structure of each table, data types, sample data, sample SQL, and processing tips into respective prompt files. -

SQL generation using generative AI

I give the generative AI a prompt describing the full flow: 'Generate SQL based on table info, save it, and verify execution,' and it automatically produces the SQL file. -

Execution and checking with python

I execute the generated SQL using a Python script. If an error occurs, I feed back the error log and prompt automatic correction.

Key Points of the Approach

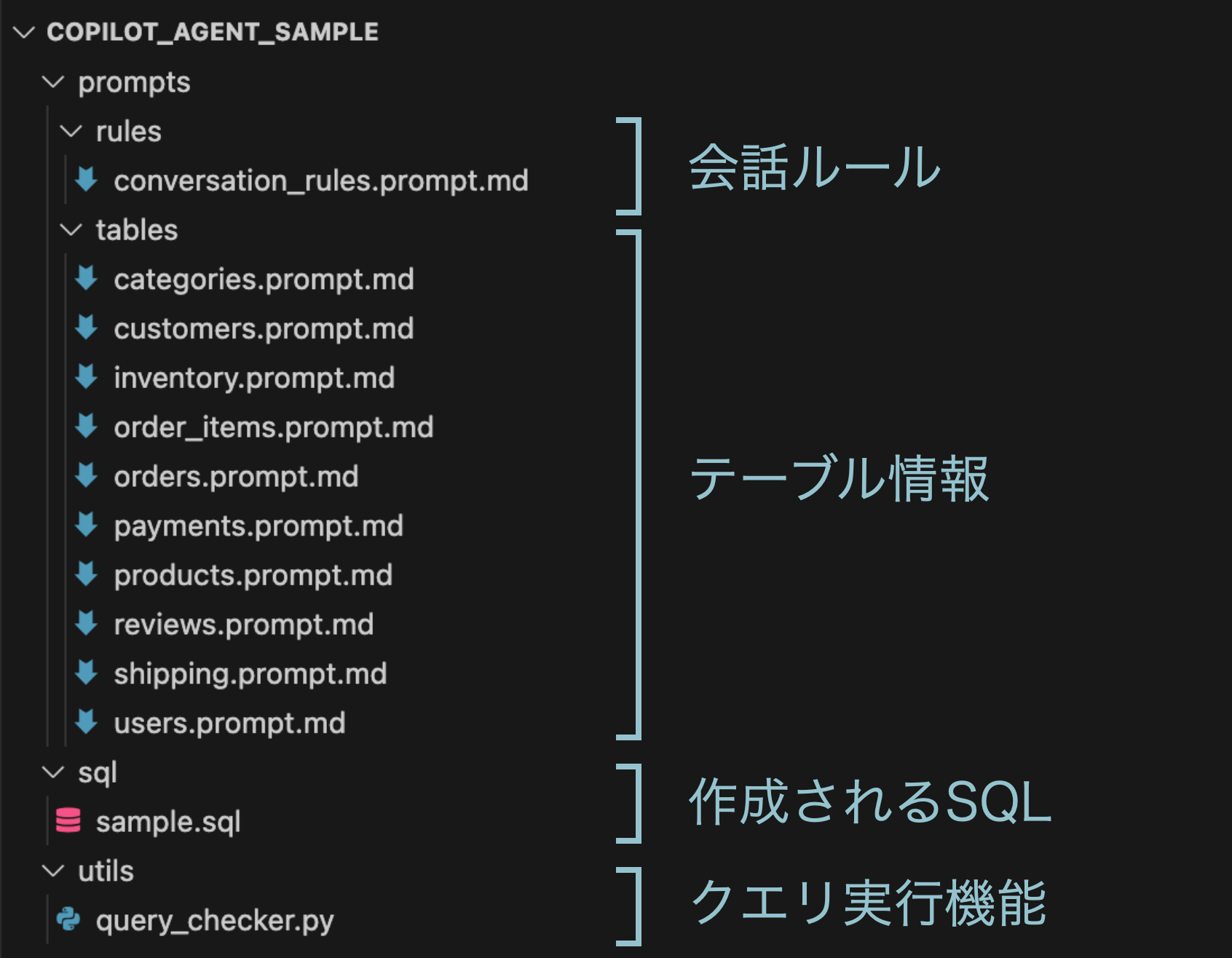

Below are the directory structure and example files I actually built:

-

rules / conversation_rules.prompt.md

Basic conversation rules Generate and save SQL based on table information, then execute the Python file to check whether the SQL is correct. Also, describe the rules for SQL creation and the preferred conversation style. -

tables / <table_name>.prompt.md

Enter table information. By including sample data, have the generative AI judge the characteristics of the data.## テーブル名 users ## 説明 ユーザー情報のテーブル。 user_idをキーにorderテーブルと紐付け可能 ## sample SQL ```sql select user_id as "顧客ID", name as "顧客名", address as "住所" from users ``` ## sample data | 顧客ID | 顧客名 | 住所 | | ------ | -------- | -------------------------------- | | 1 | 山田太郎 | 東京都新宿区西新宿 | | 2 | 佐藤花子 | 大阪府大阪市中央区難波 | | 3 | 鈴木次郎 | 愛知県名古屋市中区栄 | -

sql /

Have the generated SQL saved as a file. -

utils / query_checker.py

After creating the SQL, execute this Python file to check whether the SQL is correct.

Example of Actual GitHub Copilot Agent Response

Here’s a simple example to check its actual operation.

[in] Write an SQL query to count how many emails were sent this month, grouped by user.

[out]

First, let’s check the schema information, then create an SQL query to analyze email delivery.

We’ll look for table information related to email delivery.

"› Searched for files matching /prompts/tables/**, 14 matches"

We’ve located schema information related to email delivery history!

Let’s review the details and write an SQL query to extract the number of deliveries made this month.

Read メール配信履歴.prompt.md

Schema information has been confirmed. It appears the data is stored in a table named [メール配信履歴テーブル] (Email Delivery History Table).

We’ll now create an SQL query to aggregate the number of email deliveries for this month (April 2025).

Key columns in this table include:

• email_id: メルマガID

• delivery_timestamp: 配信日時

• delivery_status: 配信ステータス

Now, let’s create the SQL query to aggregate the number of email deliveries for this month and save it to a file named メール配信集計_今月.sql.

This SQL query will:

・ Filter emails delivered between April 1 and April 30, 2025

・ Include only rows where delivery the status is "Delivered"

・ Calculate the number of deliveries, successes, failures, and total deliveries per user

・ Display overall totals at the end

Would you like to validate the query using Python?

Let’s run the SQL we just created for verification:

python3 query_checker.py メール配信集計_今月.sql

SQL validation complete! The query was successfully validated and is executable.

The test run showed no syntax errors and confirmed a correct execution plan.

Results and Future Prospects

Fortunately, the entire process operated smoothly, marking the first step toward automating SQL creation!

That said, the process is not perfect. What challenges and improvements should I work on?

● Current Challenges

-

Review workload

I tend to miss errors more easily when reviewing AI-generated SQL compared to writing SQL manually.

I am still in the trial-and-error stage of developing a verification mechanism and methods that would enable even non-SQL users to effectively review queries, and I hope to improve this going forward. I also hope that advances in generative AI will help address these challenges! -

Checking whether data has been extracted as intended

There are cases where the requirement definitions are incomplete or I fail to verbalize the information in my mind accurately, making it difficult to automatically determine whether the processing aligns with my intent. There is still room for improvement in conveying subtle nuances and intentions.

● The Next Challenges

-

Automation of number check

As a first step in sophisticating reviews, I would like to implement a function to check whether the number of extracted items is as I intended. -

Accumulation of data processing methods that can be called the "secret sauce"

I want to keep adding to the prompts effective data processing techniques that become more obvious as I use them more. -

Expansion to analysis automation

Ultimately, I aim to create a system that can automate, to some extent, the workflow from SQL creation to analysis of extracted data!

関連記事 | Related Posts

We are hiring!

シニア/フルスタックエンジニア(JavaScript・Python・SQL)/契約管理システム開発G/東京

契約管理システム開発グループについて◉KINTO開発部 :66名・契約管理開発G :9名★←こちらの配属になります・KINTO中古車開発G:9名・KINTOプロダクトマネジメントG:3名・KINTOバックエンド開発G:16名・KINTO開発推進G:8名・KINTOフロントエンド...

生成AIエンジニア/AIファーストG/東京・名古屋・大阪・福岡

AIファーストGについて生成AIの活用を通じて、KINTO及びKINTOテクノロジーズへ事業貢献することをミッションに2024年1月に新設されたプロジェクトチームです。生成AI技術は生まれて日が浅く、その技術を業務活用する仕事には定説がありません。

![Cover Image for [生成AI][Copilot] 非エンジニアの私がAIを使って運用ツールを開発した話](/assets/blog/authors/yamayuki/01.png)